Tech & Innovation

1 Feb 2021

10 Min Read

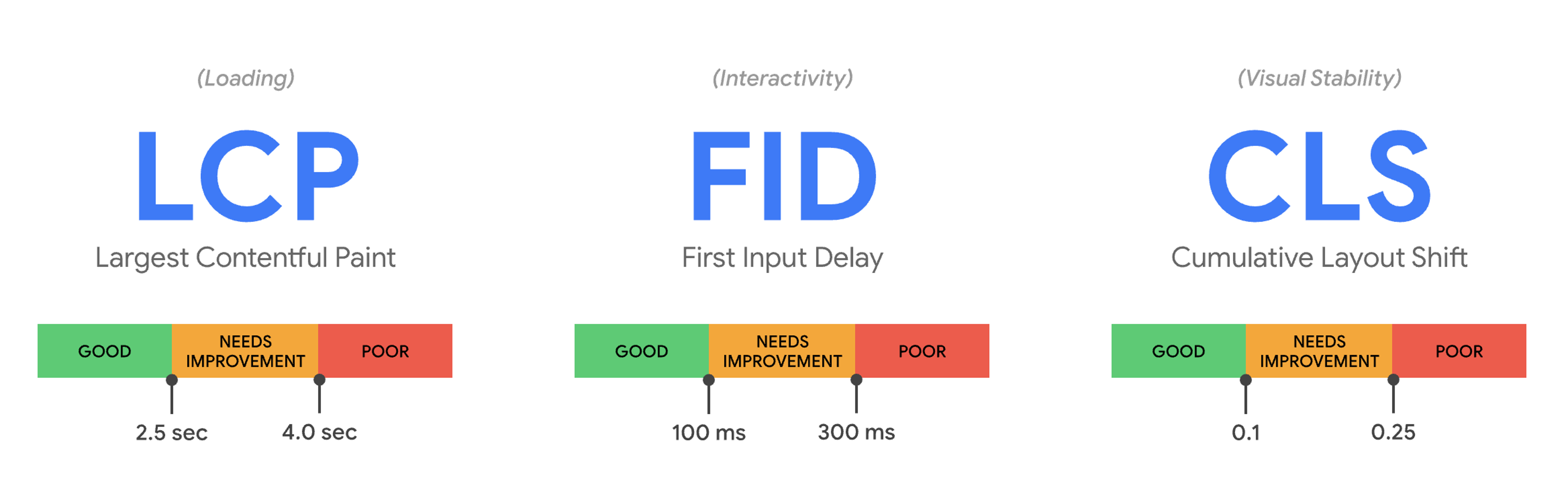

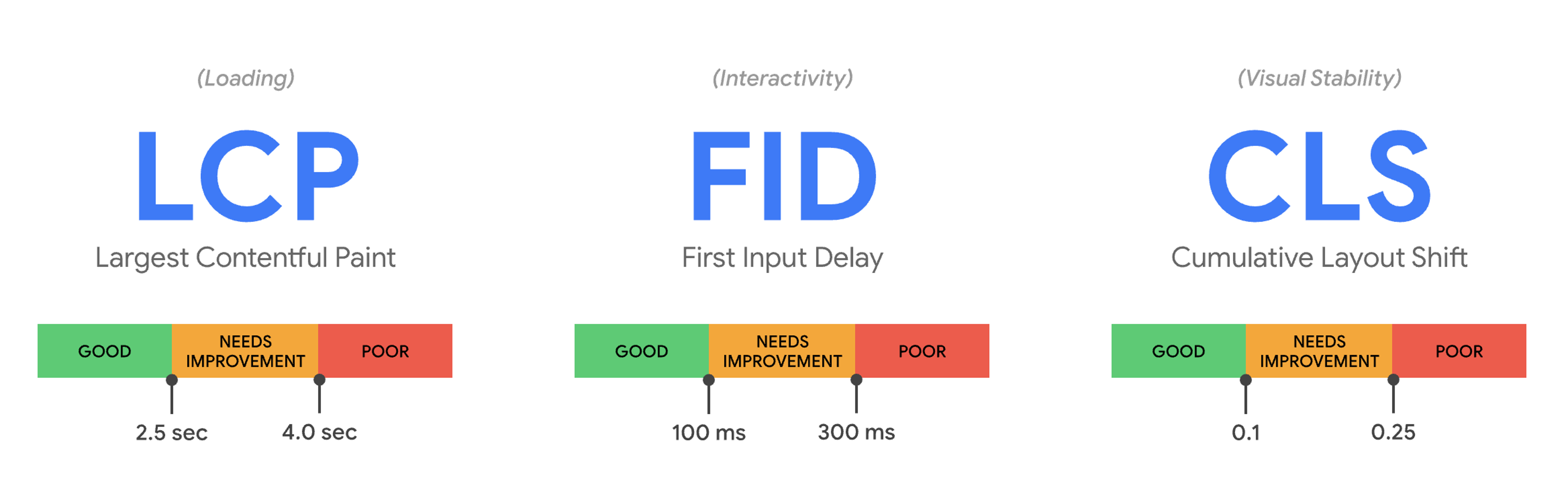

How to Prepare for Google's June 2021 Core Web Vitals Update
